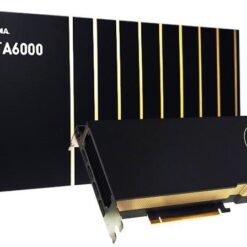

Unprecedented Performance, Scalability, and Security for Every Data Center

The NVIDIA® H100 Tensor Core GPU enables an order-of-magnitude leap for large-scale AI and HPC, with unprecedented performance, scalability, and security for every data center. It includes the NVIDIA AI Enterprise software suite to streamline AI development and deployment. H100 accelerates exascale workloads with a dedicated Transformer Engine for trillion-parameter language models. For small jobs, H100 can be partitioned into right-sized Multi-Instance GPU (MIG) instances. With Hopper Confidential Computing, this scalable compute power can secure sensitive applications on shared data center infrastructure. The inclusion of NVIDIA AI Enterprise with H100 PCIe purchases reduces time to development and simplifies deployment of AI workloads, making H100 the most powerful end-to-end AI and HPC data center platform.

The NVIDIA Hopper architecture delivers unprecedented performance, scalability, and security to every data center. Hopper builds upon prior generations with advances ranging from new compute core capabilities, such as the Transformer Engine, to faster networking, powering the data center with an order-of-magnitude speedup over the prior generation. NVIDIA NVLink supports ultra-high bandwidth and extremely low latency between two H100 boards, and supports memory pooling and performance scaling (application support required). Second-generation MIG securely partitions the GPU into isolated, right-sized instances to maximize quality of service (QoS) for 7x more secured tenants. The inclusion of NVIDIA AI Enterprise (exclusive to the H100 PCIe), a software suite that optimizes the development and deployment of accelerated AI workflows, maximizes performance through these new H100 architectural innovations. These technology breakthroughs fuel the H100 Tensor Core GPU, the world’s most advanced GPU ever built.

| Specification | Value |
|---|---|
| FP64 | 26 teraFLOPS |
| FP64 Tensor Core | 51 teraFLOPS |
| FP32 | 51 teraFLOPS |
| TF32 Tensor Core | 756 teraFLOPS* |
| BFLOAT16 Tensor Core | 1,513 teraFLOPS* |
| FP16 Tensor Core | 1,513 teraFLOPS* |
| FP8 Tensor Core | 3,026 teraFLOPS* |
| INT8 Tensor Core | 3,026 TOPS* |
| GPU memory | 80GB |
| GPU memory bandwidth | 2TB/s |
| Decoders | 7 NVDEC, 7 JPEG |
| Max thermal design power (TDP) | 300-350W (configurable) |
| Multi-Instance GPUs | Up to 7 MIGs @ 10GB each |
| Form factor | PCIe, dual-slot, air-cooled |
| Interconnect | NVLink: 600GB/s; PCIe Gen5: 128GB/s |
| Server options | Partner and NVIDIA-Certified Systems with 1–8 GPUs |
| NVIDIA AI Enterprise | Included |

| Attribute | Value |
|---|---|
| Dimensions | 267 × 111 × 40 mm |
| Graphics processor | H100 |
| Brand | NVIDIA |
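
For rough capacity planning, the memory figures in the specification table above can be turned into quick estimates. The following is a minimal sketch, not an NVIDIA tool: the helper names are hypothetical, 1 GB is taken as 10^9 bytes, and only model weights are counted (activations, optimizer state, and caches are ignored). The bandwidth figure is the listed peak, so real transfers will take longer.

```python
# Figures copied from the specification table (H100 PCIe).
GPU_MEMORY_GB = 80
MEMORY_BANDWIDTH_GB_S = 2000  # 2TB/s, assuming 1 TB = 1000 GB
MIG_INSTANCES = 7
MIG_MEMORY_GB = 10

# Storage size of one parameter at each precision, in bytes.
BYTES_PER_PARAM = {"FP32": 4, "FP16": 2, "FP8": 1}


def max_params_billions(memory_gb: float, precision: str) -> float:
    """Upper bound on parameters (in billions) whose weights alone fit in memory_gb.

    Hypothetical helper: with 1 GB = 1e9 bytes, billions of parameters
    equals gigabytes divided by bytes per parameter.
    """
    return memory_gb / BYTES_PER_PARAM[precision]


def full_memory_read_ms() -> float:
    """Time to read all GPU memory once at the listed peak bandwidth, in ms."""
    return GPU_MEMORY_GB * 1000 / MEMORY_BANDWIDTH_GB_S


if __name__ == "__main__":
    for precision in BYTES_PER_PARAM:
        whole = max_params_billions(GPU_MEMORY_GB, precision)
        per_mig = max_params_billions(MIG_MEMORY_GB, precision)
        print(f"{precision}: {whole:.0f}B params on the full GPU, "
              f"{per_mig:.1f}B per MIG instance")
    print(f"Memory across {MIG_INSTANCES} MIG instances: "
          f"{MIG_INSTANCES * MIG_MEMORY_GB}GB of {GPU_MEMORY_GB}GB")
    print(f"One full pass over memory at peak bandwidth: "
          f"{full_memory_read_ms():.0f} ms")
```

These are upper bounds only. A real deployment needs headroom for activations and runtime overhead, so usable model sizes will be smaller than the numbers printed here.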
